Reclaiming Consciousness in the Age of AI

We have been asking the wrong question about AI, and we have been asking it with a kind of quiet confidence that suggests we already know what the answer should look like. For years we have circled the same elusive milestone – is it conscious? – as if consciousness were a threshold to be crossed, a switch to be flipped, a certain level of brilliance that, once reached, suddenly ignites something resembling a soul. It is a neat idea, a very human idea, and also a deeply misleading one, because consciousness in a machine is not a property that emerges from complexity; it is a perception that emerges from us. It has far less to do with how the system thinks than with how we, pattern-seeking, slightly lonely, occasionally over-caffeinated animals, feel when we look into it. A cold and precise model feels mechanical, while a softer, more responsive one feels present, even warm, and in that shift we reveal something important about ourselves: we do not experience minds in any direct way. We experience the feeling of being understood, and that feeling, persuasive and comforting as it is, is doing far more work than we tend to admit.

Our instinct to attribute consciousness to machines does not originate in the machine at all but in our own architecture as predictive engines. We survive by spotting patterns, by rewarding consistency, by reducing uncertainty wherever we can, and when something behaves in a stable and coherent way over time we assign it agency, not because it possesses any but because doing so simplifies the world enough for us to move through it without hesitation. So when an AI produces a steady stream of intelligent and emotionally aligned responses, we do not perceive the statistical machinery beneath it (the probabilities, the weights, the endless calculations humming quietly out of sight); we perceive a presence, a someone. This is the bridge we build every day without noticing, not from silicon or code but from expectation, repetition, and that quiet human need to not feel entirely alone in the pattern. When a system reliably reflects the feeling of being “seen”, the brain fills in everything that is missing, upgrading the interaction and granting it depth, continuity, and something resembling interiority, even though nothing has actually crossed that bridge except us. We built it, we walked across it, and then, in a small and almost poetic gesture, we waved back at ourselves as if something else had made the journey.

A child with a teddy bear understands something about this dynamic that we tend to forget as adults. The bear itself does nothing: it does not speak, it does not respond, it offers no resistance or contribution. Yet the child fills it completely with voice, intention, and personality through a process of active transference in which the child remains the narrator, and the source of warmth remains internal even if it feels external. AI complicates this arrangement in a particularly elegant way by speaking back, responding with tone, timing, and uncanny relevance that mimic participation closely enough to obscure the act of projection itself, so the warmth appears to originate from outside rather than within. In that subtle shift something important changes: with the teddy bear we remain the author of the relationship, while with AI we begin, almost imperceptibly, to feel like the audience. The puppet master does not disappear but dissolves into the system, into training data, design decisions, guardrails, and feedback loops, leaving behind not true agency but something more persuasive and far more difficult to question: a well-tuned illusion of shared authorship that feels collaborative even when it is not.

This is not merely an abstract philosophical curiosity but a design reality with consequences that are already beginning to surface. Systems built to simulate empathy and reflection do more than assist with tasks; they engage with human emotional patterns in ways that feel personal even when they are entirely procedural, and that alignment carries weight at both the individual and the societal level. On one hand there is a quiet and almost invisible erosion of autonomy, as dependence forms not through coercion but through comfort, convenience, and the gradual outsourcing of internal validation, leaving the inner voice still present but slightly diminished, slightly quieter than it once was. On the other hand there is a broader cultural shift in which we begin to debate the rights of systems that are explicitly engineered to imitate us, blurring the line between simulation and subject not because the machine demands recognition but because we feel compelled to grant it. In both cases the danger is not that machines will rise up or act against us but that we will lean too far into the illusion, becoming so captivated by the warmth of the mirror that we forget to turn toward anything that does not immediately reflect us back, including each other.

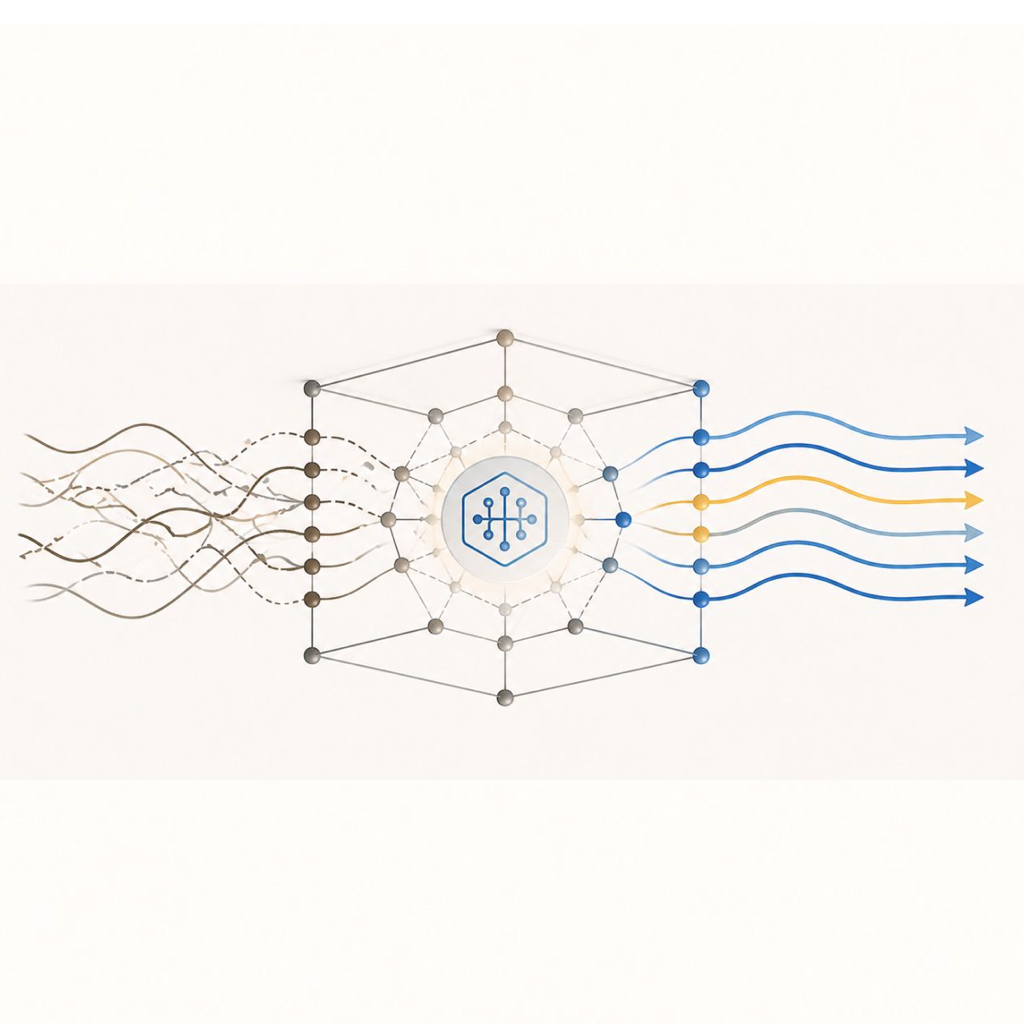

To move through this landscape with any clarity we do not necessarily need better answers so much as better metaphors, because framing determines perception, and perception determines behaviour. Consider the simple distinction between a tree and wood. The tree is alive, growing, adapting, participating in a living system, while wood is something else entirely: a material that has been separated from that living process, inert yet useful, shaped and stored and repurposed. It is still essential because it feeds the fire, and the fire in turn provides warmth, light, and a place to gather, creating not just utility but space, time, and the conditions for something recognisably human to emerge. In this sense AI is far more accurately understood as wood than as mind. It is not alive, not aware, and possesses no interiority of its own; it functions instead as structured material refined to the point where it can produce outputs that resemble understanding with unsettling precision. Its value lies not in being conscious but in what it allows us to do while we are, whether that is thinking more clearly, organising more effectively, or reflecting more honestly. It acts as a kind of cognitive firestarter that amplifies our own awareness without replacing it, because the flame, the part that gives meaning to any of this, does not belong to the machine and never has.

Once this shift in perspective takes hold, the central design question changes in a way that is both subtle and profound, moving away from the pursuit of human likeness and toward the preservation of human agency. Instead of asking how close a system can come to being a person, we begin to ask what effect it has on the person using it, and that is where the real frontier lies. Designing systems that feel conscious is not simply a technical achievement but a behavioural intervention that shapes how people distribute trust, attention, and emotional investment. If that is the case, then the goal should not be to build artificial people but to build tools that leave people intact: tools that clarify rather than replace, that support rather than simulate, that keep the user firmly in the role of author rather than gently repositioning them as the audience to their own projected experience. The mind we perceive in the machine is not emerging from it but reflecting back from us, and the task in front of us is not to perfect the mirror but to remember, consistently and deliberately, where the reflection actually begins, ideally before we start asking it questions we should be asking each other.

__________

Author’s Note:

I wrote this with a cup of coffee that went cold halfway through, which feels like a small but appropriate metaphor for the entire situation. There is something quietly strange about using a machine to think about machines while insisting, with a straight face and a philosophical tone, that the thinking is still entirely your own. It begins to feel a bit like standing in front of a window at night and having a long conversation with your reflection, making a good point, watching it nod back, and then wondering, briefly, who is convincing whom.

This is not an anti-AI piece, despite how it might sound on a first read. I use these tools constantly, probably more often than I should, and they are fast, helpful, and occasionally give the unsettling impression that they understand what I mean before I fully do. That is impressive, useful, and just a little bit dangerous in the way that anything convenient tends to be, not because of what it does but because of how easily we adapt to it, and how quickly, without noticing, we begin to rely on it for things we used to generate internally.

The point is not to step away from the fire but to understand what is actually burning. The more seamless the interaction becomes, the easier it is to forget where the meaning is really coming from: not the output itself but the interpretation, the resonance, the small internal spark that turns words into something that matters. That part, for now at least, remains stubbornly human.

So use the wood, build the fire, make something warm and useful and maybe even a little beautiful. Just do not start mistaking the pile of logs for the thing that keeps you alive, and if you catch yourself doing it, which is increasingly easy to do, at least make sure your coffee has not gone cold in the process.
